Earlier this month, the Pegasus team was very excited to hear about LIGO’s incredible discovery: the first detection of gravitational waves, produced by a pair of colliding black holes. Since then, LIGO and Pegasus have been featured in several media outlets. Below, we summarize some of these articles and link to the scientific paper that cites Pegasus publications.

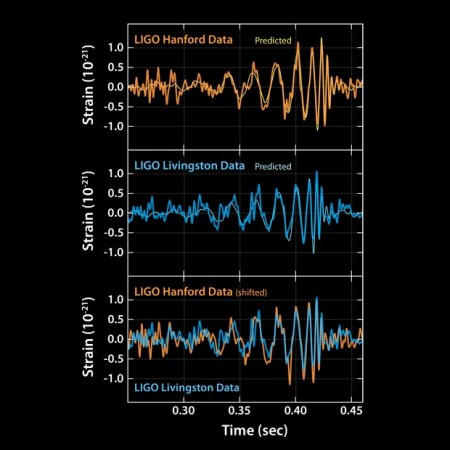

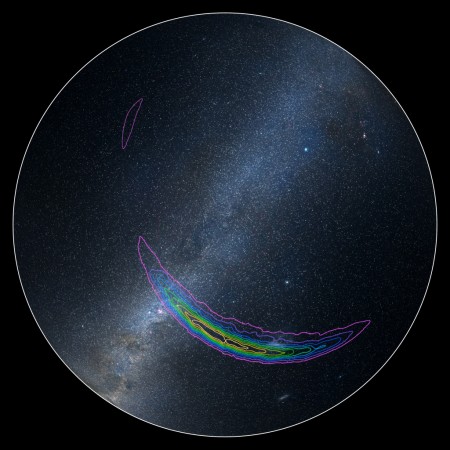

Image Credit: Caltech/MIT/LIGO Lab

GW150914: First results from the search for binary black hole coalescence with Advanced LIGO

Read the publication...

For additional information on the LIGO project, please refer to their main publication (LIGO: The Laser Interferometer Gravitational-Wave Observatory) or their research group website.

LIGO and Pegasus in the media:

ISI Computer Scientists Had Behind-the-Scenes Role in LIGO Gravitational Wave Detection

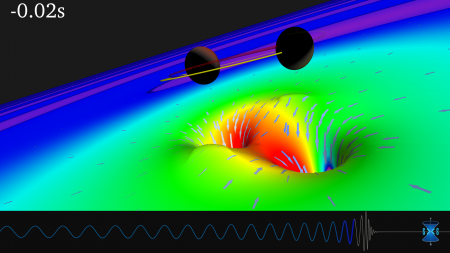

As early as 2001, the Pegasus team at the Information Sciences Institute (ISI) had embarked upon an effort to create software that would accelerate scientific discovery by enabling scientists to perform computations on massive amounts of data. This week, physicists at the Laser Interferometer Gravitational-Wave Observatory (LIGO) announced a long-awaited scientific discovery: the detection of gravitational waves, as predicted by Einstein’s General Theory of Relativity. Pegasus was ever-present behind the scenes.

OSG helps LIGO scientists confirm Einstein’s last unproven theory

“When a workflow might consist of 600,000 jobs, we don’t want to rerun them if we make a mistake. So we use DAGMan (Directed Acyclic Graph Manager, a meta-scheduler for HTCondor) or Pegasus workflow manager to optimize changes,” added Couvares. “The combination of Pegasus, Condor, and OSG work great together.” Keeping track of what has run and how the workflow progresses, Pegasus translates the abstract layer of what needs to be done into actual jobs on Condor, which then puts them out on OSG.

Read more...
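To illustrate the idea in the quote above (not rerunning finished jobs after a mistake), here is a minimal sketch of a workflow runner over a directed acyclic graph. It is a toy under stated assumptions, not the Pegasus or DAGMan API: the function `run_workflow`, the job names, and the `run_job` callback are all hypothetical. It loosely mirrors how DAGMan's rescue DAG lets a resubmitted workflow skip nodes that already completed. It requires Python 3.9+ for `graphlib`.

```python
from graphlib import TopologicalSorter


def run_workflow(dependencies, run_job, completed=frozenset()):
    """Hypothetical toy runner: execute jobs in dependency order.

    dependencies: dict mapping a job name to the job names it depends on.
    run_job: callable taking a job name, returning True on success.
    completed: jobs that already finished in an earlier attempt.
    Returns the set of jobs that have completed so far, which can be
    passed back in as `completed` on a retry.
    """
    done = set(completed)
    for job in TopologicalSorter(dependencies).static_order():
        if job in done:
            continue  # finished in a previous attempt, so do not rerun it
        if not set(dependencies.get(job, ())) <= done:
            continue  # a parent failed or was skipped, so this job must wait
        if run_job(job):
            done.add(job)
    return done


# Hypothetical diamond-shaped workflow: one fetch, two filters, one analysis.
deps = {
    "filter_h1": {"fetch"},
    "filter_l1": {"fetch"},
    "analyze": {"filter_h1", "filter_l1"},
}

# First attempt: pretend "filter_l1" fails, so "analyze" cannot run yet.
first = run_workflow(deps, lambda job: job != "filter_l1")
print(sorted(first))  # ['fetch', 'filter_h1']

# Retry: only "filter_l1" and "analyze" execute; finished jobs are skipped.
second = run_workflow(deps, lambda job: True, completed=first)
print(sorted(second))  # ['analyze', 'fetch', 'filter_h1', 'filter_l1']
```

Tracking completed jobs as a plain set keeps the sketch short; a real workflow system would also persist that state to disk so a retry survives a crash.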

USC helps discover gravitational waves with Pegasus

The discovery relied on two interferometers, LIGO's detectors in Hanford, Washington, and Livingston, Louisiana, which use lasers and mirrors to measure tiny changes in the lengths of their arms. Pegasus helped analyze and sort the noise coming from the interferometers; then, once candidate signals were identified, it provided a way to measure whether they were significant enough to warrant deeper analysis.

OSG helps LIGO confirm Einstein’s theory

Thus far, LIGO has consumed almost four million hours on OSG, of which 628,602 hours were on Comet and 430,960 on Stampede resources. OSG’s Brian Bockelman of the University of Nebraska-Lincoln and Edgar Fajardo from SDSC used HTCondor software to help LIGO run their Pegasus workflow on 16 clusters at universities and national labs across the US.

Read more...

Caltech wasn’t the only SoCal school helping discover gravitational waves

When the news finally broke several months after that initial detection, it wasn’t just Caltech celebrating. A team at USC was also basking in the glow of a job well done. Ewa Deelman’s team designed a program called Pegasus that helped LIGO researchers manage data and streamline their workflows. That came in handy, since around a thousand researchers from around the globe were working on the project, and they needed to share complicated information.

The Pegasus Workflow Manager and the Discovery of Gravitational Waves

We have all heard so much about the wonderful discovery of Gravitational Waves – and with just cause! In today’s post, I want to give a shout-out to the Pegasus Workflow Manager, one of the crucial pieces of software used in analyzing the LIGO data. Processing these data requires complex workflows involving transferring and managing large data sets, and performing thousands of tasks.

Read more...

High Throughput Computing helps LIGO confirm Einstein’s last unproven theory

“I think the automation and the reliability provided by Pegasus and HTCondor are key to enabling scientists to focus on their science, rather than the details of the underlying cyber-infrastructure and its inevitable failures.”

OSG integrates global computing to support detection of colliding neutron stars by LIGO, Virgo, and DECam

LIGO’s scientific application software uses the Pegasus workflow manager on top of HTCondor and the OSG resource provisioning system to accomplish global science.
